Today, we’re adding new built-in tools to the Responses API, our core API primitive for building agentic applications. This includes support for all remote Model Context Protocol (MCP) servers, new tools such as image generation and Code Interpreter, and improvements to file search. These tools are available across our GPT‑4o series, GPT‑4.1 series, and OpenAI o-series reasoning models. o3 and o4-mini can now call tools and functions directly within their chain-of-thought in the Responses API, producing answers that are more contextually rich and relevant. Using o3 and o4-mini with the Responses API preserves reasoning tokens across requests and tool calls, improving model intelligence and reducing cost and latency for developers.

We’re also introducing new features in the Responses API that improve reliability, visibility, and privacy for enterprises and developers. These include background mode to handle long-running tasks asynchronously and more reliably, support for reasoning summaries, and support for encrypted reasoning items.

Since releasing the Responses API in March 2025 with tools like web search, file search, and computer use, hundreds of thousands of developers have used the API to process trillions of tokens across our models. Customers have used the API to build a variety of agentic applications, including Zencoder’s coding agent, Revi’s market intelligence agent for private equity and investment banking, and MagicSchool AI’s education assistant, all of which use web search to pull relevant, up-to-date information into their apps. Now developers can build agents that are even more useful and reliable with access to the new tools and features released today.

## New remote MCP server support

We’re adding support for remote MCP servers in the Responses API, building on the release of MCP support in the Agents SDK. MCP is an open protocol that standardizes how applications provide context to LLMs. With MCP support in the Responses API, developers can connect our models to tools hosted on any MCP server with just a few lines of code. Here is an example of using a remote MCP server with the Responses API today:


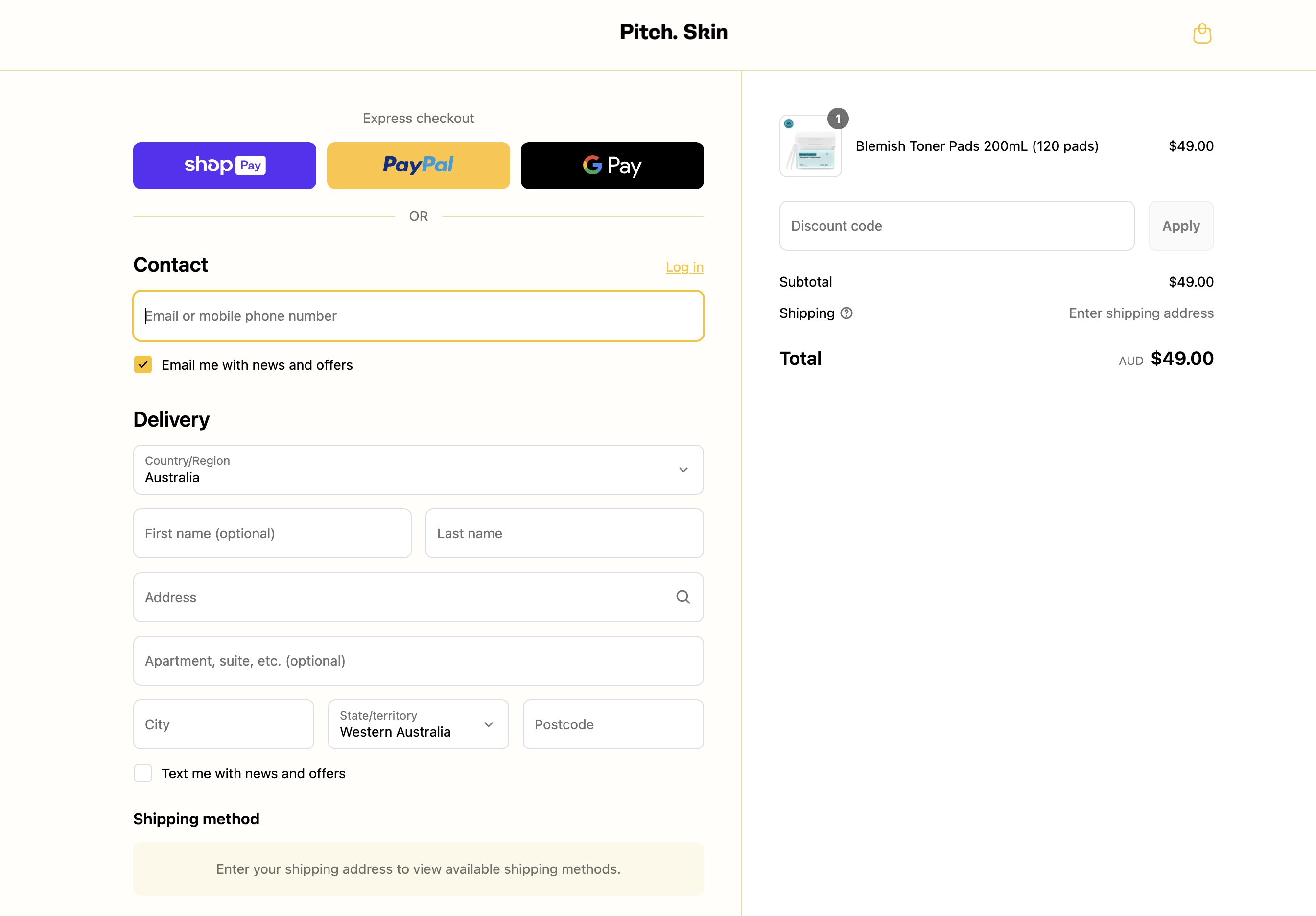

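```python
from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment

response = client.responses.create(
    model="gpt-4.1",
    tools=[{
        "type": "mcp",
        "server_label": "shopify",
        "server_url": "https://pitchskin.com/api/mcp",
    }],
    input="Add the Blemish Toner Pads to my cart",
)
print(response.output_text)
```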

Example output: "The Blemish Toner Pads have been added to your cart! You can proceed to checkout here: …"
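Besides the final answer, a response's `output` list also records the MCP traffic, which is useful for logging which remote tools an agent actually used. The helper below is a minimal sketch that assumes the output items have been converted to plain dicts (for example via `response.model_dump()["output"]`) and that each MCP tool invocation appears as an item of type `"mcp_call"` with `server_label`, `name`, and JSON-encoded `arguments` fields; check these field names against the current API reference. The `update_cart` tool name and the fake output list are hypothetical, for offline illustration only.

```python
import json
from typing import Any


def summarize_mcp_calls(output_items: list[dict[str, Any]]) -> list[str]:
    """Return one readable line per MCP tool call in a response's output list.

    Assumes MCP calls are items with type "mcp_call" carrying "server_label",
    "name", and a JSON-encoded "arguments" string (an assumption about the schema).
    """
    lines = []
    for item in output_items:
        if item.get("type") != "mcp_call":
            continue  # skip tool listings, reasoning items, messages, etc.
        args = json.loads(item.get("arguments") or "{}")
        rendered = ", ".join(f"{key}={value!r}" for key, value in args.items())
        lines.append(f"{item['server_label']}.{item['name']}({rendered})")
    return lines


# Hypothetical output shaped like the Shopify example, for offline testing.
fake_output = [
    {"type": "mcp_list_tools", "server_label": "shopify", "tools": []},
    {
        "type": "mcp_call",
        "server_label": "shopify",
        "name": "update_cart",  # hypothetical tool name
        "arguments": '{"product": "Blemish Toner Pads", "quantity": 1}',
    },
    {"type": "message", "content": []},
]
print(summarize_mcp_calls(fake_output))
```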

Popular remote MCP servers include Cloudflare, HubSpot, Intercom, PayPal, Plaid, Shopify, Stripe, Square, Twilio, Zapier, and more. We expect the ecosystem of remote MCP servers to grow quickly in the coming months, making it easier for developers to build powerful agents that can connect to the tools and data sources their users already rely on. In order to best support the ecosystem and contribute to this developing standard, OpenAI has also joined the steering committee for MCP.

To learn how to spin up your own remote MCP server, see Cloudflare’s guide to building remote MCP servers. To learn how to use the MCP tool in the Responses API, see the MCP tool guide in our API Cookbook.
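Under the hood, MCP messages are JSON-RPC 2.0 requests, which is what lets one client talk to any compliant server. The sketch below builds the two requests an MCP client (such as the Responses API acting on your behalf) roughly needs: `tools/list` to discover a server's tools and `tools/call` to invoke one. It is a simplified illustration based on the MCP specification: the initialization handshake and the HTTP transport are omitted, and the `search_products` tool is hypothetical.

```python
import itertools
import json
from typing import Any, Optional

# JSON-RPC 2.0 matches responses to requests by id, so ids must be unique per session.
_request_ids = itertools.count(1)


def mcp_request(method: str, params: Optional[dict[str, Any]] = None) -> str:
    """Serialize a JSON-RPC 2.0 request of the kind MCP clients send."""
    message: dict[str, Any] = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Discover the server's tools, then call one of them by name with JSON arguments.
list_tools = mcp_request("tools/list")
call_tool = mcp_request(
    "tools/call",
    {"name": "search_products", "arguments": {"query": "toner pads"}},  # hypothetical tool
)
print(list_tools)
print(call_tool)
```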

## Updates to image generation, Code Interpreter, and file search

With built-in tools in the Responses API, developers can easily create more capable agents with just a single API call. By calling multiple tools while reasoning, models now achieve significantly higher tool-calling performance on industry-standard benchmarks like Humanity’s Last Exam. Today, we’re adding image generation and Code Interpreter as built-in tools and improving file search.
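Because these tools are built in, a single request can enable several of them at once and let the model decide which to call while it reasons. The sketch below only assembles the keyword arguments for `client.responses.create()` so it can run offline; the tool `type` strings follow the names used in the API docs at the time of writing, and the vector store ID is a hypothetical placeholder. It uses o3 because, among the reasoning models, image generation is supported only on o3.

```python
from typing import Any


def build_request(prompt: str, vector_store_id: str) -> dict[str, Any]:
    """Assemble kwargs for client.responses.create() with several built-in tools enabled."""
    return {
        "model": "o3",
        "input": prompt,
        "tools": [
            {"type": "image_generation"},
            {"type": "code_interpreter", "container": {"type": "auto"}},
            {"type": "file_search", "vector_store_ids": [vector_store_id]},
        ],
    }


request = build_request(
    "Chart last quarter's sales from the attached report.",
    "vs_example123",  # hypothetical vector store ID
)
# With a configured client: response = client.responses.create(**request)
print([tool["type"] for tool in request["tools"]])
```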

## New features in the Responses API

In addition to the new tools, we’re also adding support for new features in the Responses API, including:

* Background mode: As seen in agentic products like Codex, deep research, and Operator, reasoning models can take several minutes to solve complex problems. Developers can now use background mode to build similar experiences on models like o3 without worrying about timeouts or other connectivity issues, because background mode runs these tasks asynchronously. Developers can either poll the response object to check for completion or start streaming events whenever their application needs to catch up on the latest state.

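```python
from openai import OpenAI

client = OpenAI()

response = client.responses.create(
    model="o3",
    input="Write me an extremely long story.",
    reasoning={"effort": "high"},
    background=True,  # returns immediately; the task keeps running server-side
)
print(response.id, response.status)
```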

* Reasoning summaries: The Responses API can now generate concise, natural-language summaries of the model’s internal chain-of-thought, similar to what you see in ChatGPT. This makes it easier for developers to debug, audit, and build better end-user experiences. Reasoning summaries are available at no additional cost.

```python
from openai import OpenAI

client = OpenAI()

response = client.responses.create(
    model="o4-mini",
    tools=[
        {
            "type": "code_interpreter",
            "container": {"type": "auto"},
        }
    ],
    instructions=(
        "You are a personal math tutor. "
        "When asked a math question, run code to answer the question."
    ),
    input="I need to solve the equation `3x + 11 = 14`. Can you help me?",
    reasoning={"summary": "auto"},
)
```
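When a request sets `reasoning={"summary": "auto"}`, the summaries come back inside reasoning items in the response's `output` list. The helper below is a minimal sketch that assumes those items are plain dicts of type `"reasoning"` whose `summary` field is a list of `{"type": "summary_text", "text": ...}` parts; verify the shape against the API reference for your SDK version. The fake output and summary text are hypothetical, for offline illustration.

```python
from typing import Any


def reasoning_summary(output_items: list[dict[str, Any]]) -> str:
    """Join the summary_text parts of every reasoning item in a response's output."""
    parts = []
    for item in output_items:
        if item.get("type") != "reasoning":
            continue  # skip tool calls and assistant messages
        for part in item.get("summary", []):
            if part.get("type") == "summary_text":
                parts.append(part["text"])
    return "\n\n".join(parts)


# Hypothetical output for the math-tutor request, for offline testing.
fake_output = [
    {
        "type": "reasoning",
        "summary": [
            {"type": "summary_text", "text": "Subtract 11 from both sides, then divide both sides by 3."},
        ],
    },
    {"type": "code_interpreter_call"},
    {"type": "message", "content": []},
]
print(reasoning_summary(fake_output))
```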

* Encrypted reasoning items: Customers eligible for Zero Data Retention (ZDR) can now reuse reasoning items across API requests without any reasoning items being stored on OpenAI’s servers. For models like o3 and o4-mini, reusing reasoning items between function calls boosts intelligence, reduces token usage, and increases cache hit rates, resulting in lower costs and latency.

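```python
from openai import OpenAI

client = OpenAI()

response = client.responses.create(
    model="o3",
    input="Implement a simple web server in Rust from scratch.",
    store=False,  # nothing is persisted server-side
    include=["reasoning.encrypted_content"],  # return reasoning items in encrypted form
)
```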
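With `store=False`, the server keeps no conversation state, so reusing reasoning means sending the earlier items back yourself: the prior input, the previous response's output items (including the encrypted reasoning items), and then the new user turn. The sketch below builds that next `input` list from plain dicts and fails fast if a reasoning item arrived without `encrypted_content`, which suggests the `include` option was missing. The function name, the fake items, and the placeholder blob are hypothetical; only the `encrypted_content` field and the `include` value come from the request shown above.

```python
from typing import Any


def next_turn_input(
    history: list[dict[str, Any]],
    previous_output: list[dict[str, Any]],
    user_text: str,
) -> list[dict[str, Any]]:
    """Build the input for the next stateless (store=False) request.

    Reasoning items are passed back unchanged so their encrypted_content
    returns to the API; the new user turn is appended at the end.
    """
    for item in previous_output:
        if item.get("type") == "reasoning" and not item.get("encrypted_content"):
            raise ValueError(
                'reasoning item lacks encrypted_content; request it with include=["reasoning.encrypted_content"]'
            )
    return [*history, *previous_output, {"role": "user", "content": user_text}]


# Hypothetical items from the first request, for offline testing.
history = [{"role": "user", "content": "Implement a simple web server in Rust from scratch."}]
previous_output = [
    {"type": "reasoning", "id": "rs_demo", "summary": [], "encrypted_content": "<opaque blob>"},
    {"type": "message", "role": "assistant", "content": []},
]
next_input = next_turn_input(history, previous_output, "Now add request logging.")
print(len(next_input))
```

The resulting list would then be sent with the same `store=False` and `include=["reasoning.encrypted_content"]` settings so that the next turn's reasoning items are also returned encrypted.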

## Pricing and availability

All of these tools and features are now available in the Responses API, supported in our GPT‑4o series, GPT‑4.1 series, and our OpenAI o-series reasoning models (o1, o3, o3‑mini, and o4-mini). Among the reasoning models, image generation is supported only on o3.

Pricing for existing tools remains the same. Image generation costs $5.00 / 1M text input tokens, $10.00 / 1M image input tokens, and $40.00 / 1M image output tokens, with 75% off cached input tokens. Code Interpreter costs $0.03 per container. File search costs $0.10 / GB of vector storage per day and $2.50 / 1K tool calls. There is no additional cost to call the remote MCP server tool; you are simply billed for output tokens from the API. Learn more about pricing in our docs.
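To turn these list prices into a budget estimate, the per-unit rates can be applied directly. The sketch below hard-codes the prices quoted above and assumes the 75% cached-input discount applies to both cached text and cached image input tokens, since the pricing note does not say which input types it covers. It estimates only the tool charges listed here; model token usage and any later price changes are not modeled.

```python
# List prices quoted above (USD).
TEXT_INPUT_PER_M = 5.00     # image generation, per 1M text input tokens
IMAGE_INPUT_PER_M = 10.00   # image generation, per 1M image input tokens
IMAGE_OUTPUT_PER_M = 40.00  # image generation, per 1M image output tokens
CACHED_DISCOUNT = 0.75      # "75% off cached input tokens" (assumed to cover text and image input)
FILE_SEARCH_GB_DAY = 0.10   # file search storage, per GB per day
FILE_SEARCH_PER_1K = 2.50   # file search, per 1K tool calls


def image_generation_cost(
    text_in: int,
    image_in: int,
    image_out: int,
    cached_text_in: int = 0,
    cached_image_in: int = 0,
) -> float:
    """Estimate image generation cost in USD; cached_* are the cached share of text_in / image_in."""
    if cached_text_in > text_in or cached_image_in > image_in:
        raise ValueError("cached token counts cannot exceed total input tokens")
    cached_rate = 1 - CACHED_DISCOUNT
    usd_times_million = (
        (text_in - cached_text_in) * TEXT_INPUT_PER_M
        + cached_text_in * TEXT_INPUT_PER_M * cached_rate
        + (image_in - cached_image_in) * IMAGE_INPUT_PER_M
        + cached_image_in * IMAGE_INPUT_PER_M * cached_rate
        + image_out * IMAGE_OUTPUT_PER_M
    )
    return usd_times_million / 1_000_000


def file_search_cost(storage_gb: float, days: int, tool_calls: int) -> float:
    """Estimate file search cost in USD from vector storage and tool-call volume."""
    return storage_gb * days * FILE_SEARCH_GB_DAY + tool_calls / 1_000 * FILE_SEARCH_PER_1K


# 1,000 text input tokens and 4,000 image output tokens: 0.005 + 0.16 = 0.165 USD.
print(round(image_generation_cost(1_000, 0, 4_000), 6))
# 2 GB stored for 30 days plus 4,000 calls: 6.00 + 10.00 = 16.00 USD.
print(round(file_search_cost(2.0, 30, 4_000), 2))
```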

We’re excited to see what you build!
