This is a guest post by Narendra Kumar, Head of Platform – Data at Razorpay, in partnership with AWS.

In this post, we explore how Razorpay, India’s leading FinTech company, transformed their data platform by migrating from a third-party solution to Amazon EMR, unlocking improved performance and significant cost savings. We’ll walk through the architectural decisions that guided this migration, the implementation strategy, and the measurable benefits Razorpay achieved.

Founded in 2014, Razorpay has become a powerhouse in comprehensive payment solutions, enabling businesses to accept, process, and disburse payments online. With offerings like RazorpayX for business banking and Razorpay Capital for lending solutions, the company has experienced explosive growth and now serves millions of businesses. This rapid expansion brought significant data challenges. When Razorpay’s data platform began straining under daily processing demands of more than 1 PB, the engineering team faced a critical decision: continue scaling their existing third-party solution or modernize with a platform offering greater flexibility and control.

They chose Amazon EMR to build a comprehensive data architecture spanning batch warehousing, real-time stream processing, and interactive analytics, all running on Apache Spark with open-source Delta Lake for ACID transactions. This wasn’t simply an ETL migration; it was a complete platform transformation that gave Razorpay’s 800 daily users access to more than 60 concurrent streaming pipelines, more than 3,000 orchestrated workflows, and the ability to query 6 PB of data daily. The results validated their architectural choices: 11% better overall performance, a 21% cost reduction, and the operational flexibility to optimize Spark resource allocation, use Amazon EC2 Spot Instances, and implement advanced features like liquid clustering, all without vendor lock-in.

The data architecture has a data ingestion layer, a data processing layer, and a data consumption layer. Razorpay ingests more than 20 TB of new data every day and processes more than 1 PB of data daily using more than 60 stream processing pipelines. This data is then consumed through more than 3,000 scheduled workflows that query more than 6 PB of data daily.

Data flows from a variety of sources: online transaction processing (OLTP) databases (traditional transactional or entity stores), events such as clickstream and application events, and third-party events such as reverse extract, transform, and load (ETL). Most of the data consumption use cases power merchant reporting and internal analytics. The architecture also powers a variety of data science use cases and the financial infrastructure around a reconciliation service.

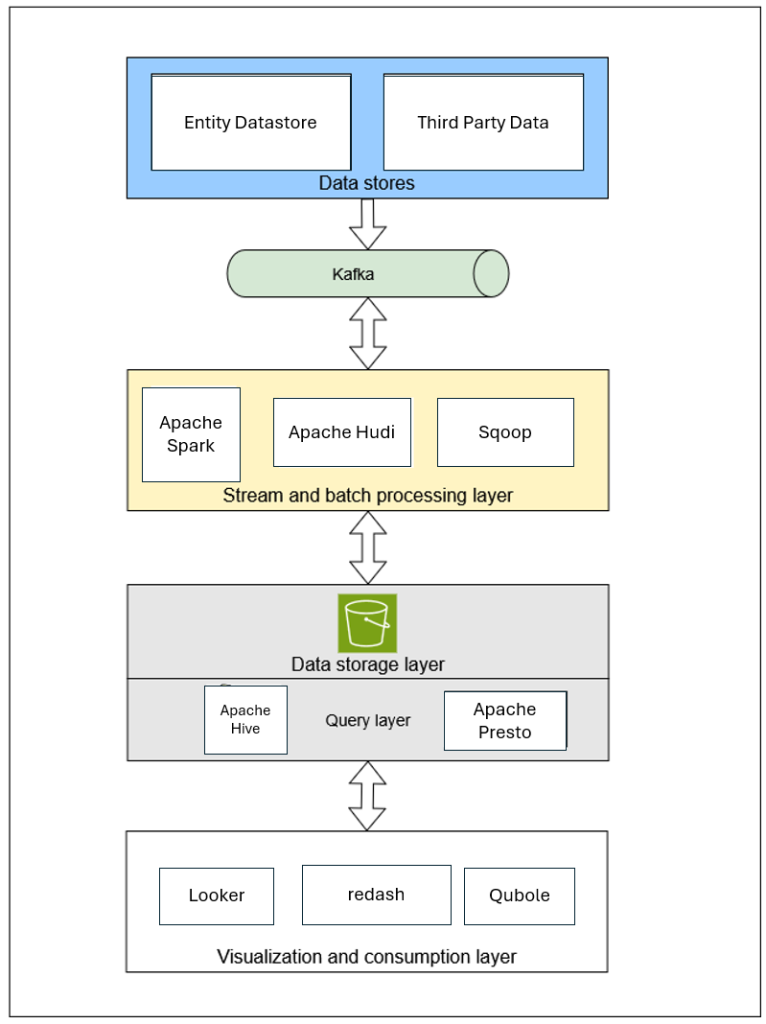

As shown in the following diagram, in its early stages, Razorpay operated on a small scale, using Sqoop to dump transactional data daily into a data lake and managing a Presto layer for querying this data. As they grew, the demand for near real-time data increased, prompting the setup of a change data capture (CDC) collector using Maxwell to stream data manipulation language (DML) events to Kafka. To further enhance data processing, Razorpay built a processing layer that consumed data from Kafka to UPSERT information into the lake using Apache Hudi.
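To make the CDC-to-upsert flow concrete, here is a minimal sketch in plain Python rather than Spark or Hudi. It assumes Maxwell-style JSON events (with `database`, `table`, `type`, `ts`, and `data` fields) and uses an in-memory dictionary as a stand-in for a lake table. The function name `apply_cdc_events`, the `id` primary key, and the sample `payments.orders` rows are hypothetical and not taken from Razorpay's pipeline.

```python
from typing import Any, Dict, Iterable, Tuple

Row = Dict[str, Any]
Key = Tuple[str, str, Any]  # (database, table, primary key value)


def apply_cdc_events(
    lake: Dict[Key, Row], events: Iterable[Dict[str, Any]], pk: str = "id"
) -> Dict[Key, Row]:
    """Apply Maxwell-style DML events to an in-memory stand-in for a lake table."""
    # Newest event timestamp applied per key, so late or replayed events
    # do not overwrite fresher data.
    last_ts: Dict[Key, int] = {}
    for event in events:
        key = (event["database"], event["table"], event["data"][pk])
        if event["ts"] < last_ts.get(key, -1):
            continue  # stale event, skip it
        last_ts[key] = event["ts"]
        if event["type"] == "delete":
            lake.pop(key, None)
        else:
            # Inserts and updates are treated the same way: that is the upsert.
            lake[key] = dict(event["data"])
    return lake


# Hypothetical sample events, including one that arrives out of order.
events = [
    {"database": "payments", "table": "orders", "type": "insert", "ts": 100,
     "data": {"id": 1, "status": "created"}},
    {"database": "payments", "table": "orders", "type": "update", "ts": 105,
     "data": {"id": 1, "status": "paid"}},
    {"database": "payments", "table": "orders", "type": "update", "ts": 101,
     "data": {"id": 1, "status": "attempted"}},  # late arrival, ignored
    {"database": "payments", "table": "orders", "type": "insert", "ts": 110,
     "data": {"id": 2, "status": "created"}},
    {"database": "payments", "table": "orders", "type": "delete", "ts": 120,
     "data": {"id": 2, "status": "created"}},
]
lake = apply_cdc_events({}, events)
print(lake)
```

In a real deployment, the table format (Apache Hudi in the early architecture) handles this merge at scale; the sketch only illustrates the semantics of keying by primary key and letting the latest event win.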

Additionally, the company onboarded data from third-party sources such as Freshdesk and Google Sheets and automated event ingestion from frontend applications using Lumberjack, thereby streamlining their data management processes.

As Razorpay scaled its operations, the demand for multiple real-time use cases became mission-critical, prompting the development of a robust data warehouse ingestion framework to efficiently ingest data into TiDB. To enhance service reliability and support dashboard querying, a low-latency, high-throughput service called Harvester was created, which stored pre-aggregated data for effective monitoring. Over time, reporting use cases emerged, leading to the use of a warehouse service to establish a denormalized report data layer while also exploring a real-time layer for dynamic insights. Additionally, to facilitate a smooth transition to microservices, Razorpay built a unified storage layer capable of supporting data from both its existing monolithic architecture and the new microservices, ensuring seamless integration and improved data accessibility across the organization.
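The idea of serving dashboards from pre-aggregated data can be sketched as follows. This is an illustration only: Harvester's internals are not described in this post, so the one-minute bucket width, the `(epoch_seconds, metric, value)` event shape, and the `pre_aggregate` function are all assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

BUCKET_SECONDS = 60  # hypothetical bucket width

Event = Tuple[int, str, float]  # (epoch_seconds, metric, value)
BucketKey = Tuple[int, str]     # (bucket_start_epoch, metric)


def pre_aggregate(events: Iterable[Event]) -> Dict[BucketKey, Dict[str, float]]:
    """Roll raw events up into per-bucket count and sum for each metric."""
    buckets: Dict[BucketKey, Dict[str, float]] = defaultdict(
        lambda: {"count": 0, "sum": 0.0}
    )
    for ts, metric, value in events:
        bucket_start = ts - (ts % BUCKET_SECONDS)  # floor to bucket boundary
        agg = buckets[(bucket_start, metric)]
        agg["count"] += 1
        agg["sum"] += value
    return dict(buckets)


# Hypothetical sample events.
sample = [
    (0, "payment.success", 100.0),
    (59, "payment.success", 50.0),
    (60, "payment.success", 25.0),
    (61, "payment.failed", 10.0),
]
rollup = pre_aggregate(sample)
print(rollup[(0, "payment.success")])
```

Because a dashboard query then reads one small row per bucket and metric instead of scanning raw events, read latency stays low even as event volume grows.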

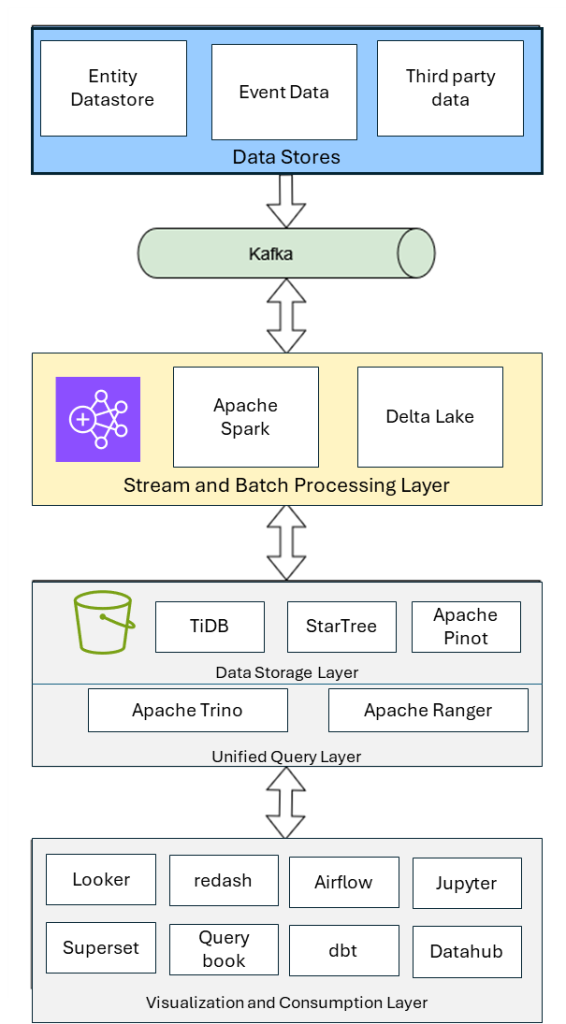

Razorpay migrated its data services to Amazon EMR using a phased approach. The solution architecture, as shown in the following diagram, comprises multiple layers that handle data ingestion, processing, and consumption.

A modern and scalable analytics platform focuses on real-time data ingestion, petabyte-scale processing, and cost-optimized storage – all orchestrated with robust workflow management:

To handle large-scale and diverse data sources, they implemented a combination of CDC and file ingestion patterns:

Razorpay designed the processing stack on Amazon EMR on Amazon Elastic Compute Cloud (Amazon EC2), with Spark as the primary compute engine.

Their data storage follows the medallion architecture pattern layered on Amazon Simple Storage Service (Amazon S3):

Complex data workflows are automated and monitored using a hybrid orchestration approach:

To ensure cost efficiency and high throughput, the following optimizations were applied:

To enable a secure migration, they followed the AWS guidance on encryption, authentication, and authorization documented in the Amazon EMR security best practices.

This architecture delivers low-latency ingestion, petabyte-scale processing, and robust workflow orchestration so that analytics teams can derive faster insights while maintaining compliance and optimizing for cost.

The combination of Debezium and Maxwell for CDC, Spark on Amazon EMR, OSS Delta Lake on Amazon S3, and Airflow with dbt has proven to be a scalable and resilient approach for modern data analytics workloads.
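The medallion layering mentioned earlier can be illustrated with a small sketch in plain Python, standing in for Spark jobs over Delta Lake tables. It uses the conventional bronze (raw), silver (validated and deduplicated), and gold (aggregated) layers. The record fields, the `to_silver` and `to_gold` functions, and the sample data are hypothetical and do not reflect Razorpay's actual schemas.

```python
from collections import defaultdict
from typing import Any, Dict, List


def to_silver(bronze: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Validate, type, and deduplicate raw records (latest version per payment wins)."""
    latest: Dict[str, Dict[str, Any]] = {}
    for rec in bronze:
        try:
            row = {
                "payment_id": str(rec["payment_id"]),
                "merchant_id": str(rec["merchant_id"]),
                "amount": int(rec["amount"]),
                "updated_at": int(rec["updated_at"]),
            }
        except (KeyError, TypeError, ValueError):
            continue  # malformed record; a real pipeline would quarantine it
        current = latest.get(row["payment_id"])
        if current is None or row["updated_at"] >= current["updated_at"]:
            latest[row["payment_id"]] = row
    return sorted(latest.values(), key=lambda r: r["payment_id"])


def to_gold(silver: List[Dict[str, Any]]) -> Dict[str, int]:
    """Aggregate cleaned records into a reporting-friendly total per merchant."""
    totals: Dict[str, int] = defaultdict(int)
    for row in silver:
        totals[row["merchant_id"]] += row["amount"]
    return dict(totals)


# Hypothetical bronze data: as ingested, with a duplicate and two bad records.
bronze = [
    {"payment_id": "p1", "merchant_id": "m1", "amount": "100", "updated_at": 1},
    {"payment_id": "p1", "merchant_id": "m1", "amount": "120", "updated_at": 2},
    {"payment_id": "p2", "merchant_id": "m2", "amount": "abc", "updated_at": 1},
    {"payment_id": "p3", "merchant_id": "m1", "amount": 30, "updated_at": 1},
    {"merchant_id": "m2"},
]
silver = to_silver(bronze)
gold = to_gold(silver)
print(gold)
```

Keeping the raw layer untouched means the silver and gold layers can be rebuilt from it whenever validation rules or aggregations change.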

Throughout their migration to Amazon EMR, Razorpay learned valuable lessons that helped optimize their data platform. We are sharing these insights to help other customers accelerate their own modernization journeys while avoiding common pitfalls.

These optimizations delivered 21% cost savings while supporting 800 daily active users and processing 1 PB of data daily. This enabled Razorpay to invest savings back into product innovation for their merchant customers, demonstrating how technical optimization directly translates to business value.

Razorpay’s migration to Amazon EMR demonstrates how the right data processing platform can transform business outcomes at scale. By delivering 11% better performance, 13-15% faster execution times, and 21% cost savings, Amazon EMR enabled Razorpay to build an enterprise-grade data platform that supports 800 daily users, more than 3,000 dashboards, and 10 million monthly queries.

To learn more about building similar data analytics solutions on AWS, check out the following resources.


Narendra is a senior data platform and engineering leader with deep experience in building and operating large-scale data platforms for high-growth FinTech and SaaS organizations. He has worked across the full data lifecycle, including real-time data ingestion, modern lakehouse architectures, analytics platforms, and ML-ready data systems, with a strong focus on reliability, scalability, and cost efficiency.

Ravi is a principal analytics specialist with experience in driving adoption of modern data architectures, enterprise data lakehouses, and real-time data systems across multiple industry verticals in India including startups and SaaS providers.

Shreshtha is a business and IT transformation leader with deep experience in large-scale cloud migrations, data platforms, and AI-driven innovation. She has led complex Amazon EMR programs, helping enterprises modernize analytics, optimize costs, and realize measurable business value through pragmatic, execution-focused strategies.